Split testing aims to improve user engagement, reduce bounce rates, and ultimately boost conversion rates. Learn the basics and how to apply them correctly.

Quick navigation ⤵️

▶ What is A/B testing?

▶ 5 main steps of A/B testing

▶ 7 main rules of A/B testing

▶ 10 useful A/B testing tools

▶ How to A/B test push notifications?

▶ In conclusion

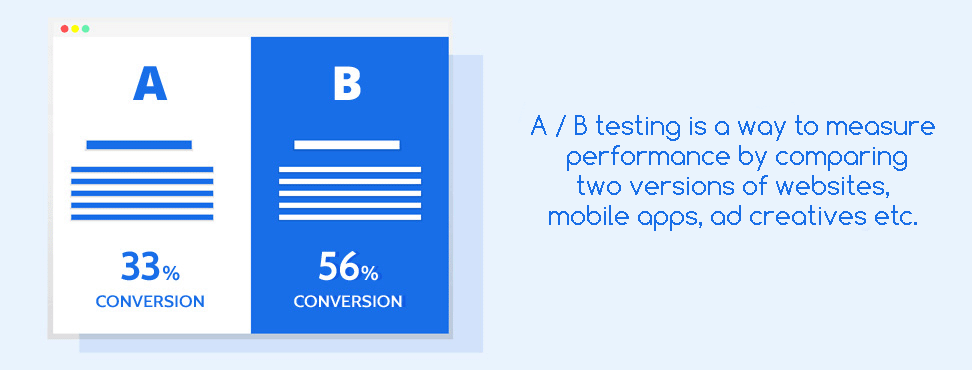

What is A/B testing?

A/B testing is a way to measure performance by comparing two versions of a website, mobile app, ad creative, etc., so you can find out which version works best for your purpose.

With A/B testing, you can gather qualitative and quantitative user insights. The advantage of testing is that you can choose the best-performing solution and, if the results are poor, minimize your losses.

You can test almost anything: new features, titles, calls to action, additional buttons and blocks, page design and other design solutions, rates and discounts, domain name options, and so on. Implementing the changes from the winning variation can help improve engagement, increase lead conversion, and raise business ROI.

A/B tests help with the following tasks:

- Increase the conversion rate.

- Increase the return on investment (ROI).

- Decrease the bounce rate.

- Reduce the risks of making changes.

- Make decisions based on statistically significant data.

- Improve the design.

- Optimize the sales funnel.

Who needs A/B testing:

- Website owners;

- Product managers;

- Marketers;

- Designers;

- Website and mobile app developers;

- Everyone who is working to improve their web resources.

5 main steps of A/B testing

1. Define goals

Start by defining the main goals of your project and make sure your A/B testing goals align with them. If you aim at the wrong target, you risk wasting time and money.

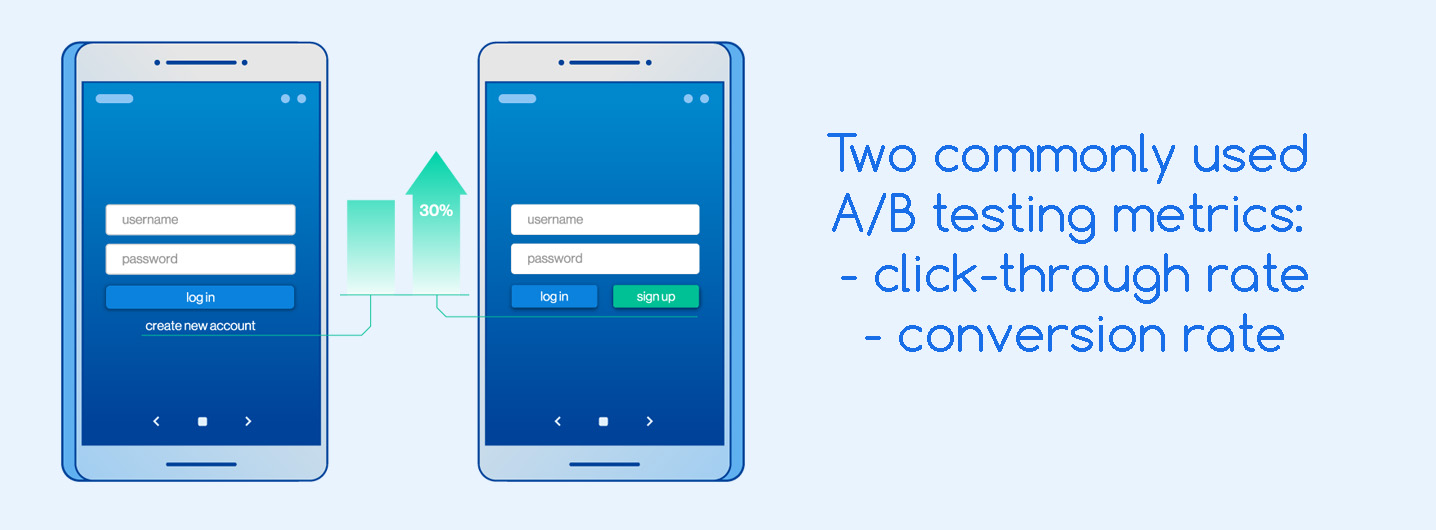

2. Define metrics

Select the metrics you will use to evaluate the test results, such as the number of clicks, sales, visitors, or revenue. For example, to understand whether a new version of the site is more successful, you need a metric to judge the result by: conversion rate, click-through rate (CTR), or another indicator.
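As an illustration, the two metrics mentioned most often are simple ratios. This is a minimal sketch that assumes you already have raw counts of impressions, clicks, visitors, and conversions; the function names and numbers are hypothetical.

```python
# Minimal sketch: the two metrics advertisers use most often.
# All counts are hypothetical illustration values.


def click_through_rate(clicks: int, impressions: int) -> float:
    """CTR = clicks / impressions (0.0 if there were no impressions)."""
    return clicks / impressions if impressions else 0.0


def conversion_rate(conversions: int, visitors: int) -> float:
    """Conversion rate = target actions (orders, sign-ups, ...) / visitors."""
    return conversions / visitors if visitors else 0.0


if __name__ == "__main__":
    print(f"CTR: {click_through_rate(250, 10_000):.2%}")  # 250 clicks out of 10,000 impressions
    print(f"CR:  {conversion_rate(30, 1_000):.2%}")       # 30 orders out of 1,000 visitors
```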

3. Formulate a hypothesis

A hypothesis is an assumption that you will seek to prove or disprove by collecting the required data. Hypotheses should target substantial growth; otherwise, they are simply not worth your time and effort.

Formulate a hypothesis using the scheme "If you do this, then that will happen." For example: "If you change the offer on the main page of the site, the conversion rate will grow from 3% to 7%." This assumption may turn out to be right or wrong; A/B testing will tell you which.
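To see how ambitious the example hypothesis is, you can express it as both an absolute and a relative change. This small sketch uses only the 3% and 7% rates from the example; the helper names are illustrative.

```python
# Minimal sketch: the example hypothesis "conversion grows from 3% to 7%".


def absolute_lift(baseline: float, target: float) -> float:
    """Change in percentage points, expressed as a fraction (0.04 = 4 points)."""
    return target - baseline


def relative_lift(baseline: float, target: float) -> float:
    """Change relative to the baseline (1.0 = +100%)."""
    return (target - baseline) / baseline


baseline, target = 0.03, 0.07
print(f"Absolute lift: {absolute_lift(baseline, target) * 100:.1f} percentage points")
print(f"Relative lift: {relative_lift(baseline, target):+.0%}")
print(f"Growth multiple: {target / baseline:.2f}x")
```

Going from 3% to 7% is only a 4-point absolute lift, but it more than doubles the conversion rate.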

4. Run an experiment

For example, say you need to test a new page on your site.

Option A is the original version of the page, unchanged.

Option B is a new version of the page (with a different design, title, or button).

First, divide your users into two equal segments and run an A/A test, in which both segments see the same version of the page. Make sure there are no statistically significant differences in the target metrics between the two groups.
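One way to run this A/A check is a two-sample test for proportions. Below is a minimal two-sided two-proportion z-test using only the Python standard library; the segment sizes and conversion counts are hypothetical, and 0.05 is assumed as the significance threshold (95% confidence).

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test with a pooled rate. Returns (z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # pooled rate is 0 or 1: both groups behaved identically
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)), which equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical A/A test: both segments saw the same, unchanged page.
z, p = two_proportion_z_test(conv_a=310, n_a=10_000, conv_b=295, n_b=10_000)
ALPHA = 0.05  # 95% confidence
if p < ALPHA:
    print(f"p = {p:.3f}: the segments differ, check the split and tracking first")
else:
    print(f"p = {p:.3f}: no significant difference, the split looks fine")
```

A significant result in an A/A test usually points to a problem with the split or the tracking, which is worth fixing before comparing A and B.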

Next, run the A/B test itself: show half of the users option A and the other half option B. Then analyze which page achieves the target action more often (more orders, subscriptions, purchases, etc.) or improves other key metrics.
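A common way to show each half of the audience its own version, while keeping every user in exactly one segment, is to hash a stable user ID into buckets. This is a sketch of the general technique, not any specific tool's method; the experiment name and user IDs are hypothetical.

```python
import hashlib
from collections import Counter


def assign_variant(user_id: str, experiment: str = "new-page-test") -> str:
    """Deterministically put a user in segment "A" or "B" (50/50 split).

    The same user_id always gets the same answer for a given experiment,
    so nobody sees both versions."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100  # bucket in 0..99
    return "A" if bucket < 50 else "B"


# Usage: hypothetical user IDs; the split is stable and close to 50/50.
counts = Counter(assign_variant(f"user-{i}") for i in range(10_000))
print(counts)
print(assign_variant("user-42") == assign_variant("user-42"))  # True: stable
```

Including the experiment name in the hash lets different tests split the same users independently.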

5. Evaluate the results

An A/B test can produce the following results:

- Version A wins, or there is no difference between the versions. In this case, it is important to understand why the new version is not working as expected and to gather information for the next tests.

- Version B wins. This means the A/B test has confirmed your hypothesis that the new version performs better than the old one.
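To decide which of these outcomes you have, one option is a confidence interval for the difference in conversion rates (B minus A). This sketch uses the normal-approximation (Wald) interval: if the whole interval lies above zero, B wins at the chosen confidence level. The counts are hypothetical.

```python
import math
from statistics import NormalDist


def compare_variants(conv_a: int, n_a: int, conv_b: int, n_b: int,
                     confidence: float = 0.95) -> tuple[str, tuple[float, float]]:
    """Classify an A/B result using a Wald confidence interval for p_B - p_A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    low, high = diff - z * se, diff + z * se
    # B wins only if the whole interval is above zero; otherwise A wins or
    # there is no detectable difference (grouped as one outcome in the text).
    verdict = "B wins" if low > 0 else "A wins or no difference"
    return verdict, (low, high)


# Hypothetical results: 300/10,000 conversions for A, 380/10,000 for B.
verdict, (low, high) = compare_variants(300, 10_000, 380, 10_000)
print(verdict, f"(95% CI for B - A: {low:+.2%} to {high:+.2%})")
```

Raising `confidence` from 0.95 to 0.98 widens the interval, so B needs a clearer advantage to be declared the winner.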

7 main rules of A/B testing

1. Test only one element. An A/B test changes only one element, not several (let alone the entire page). Each change has its own effect, so if you change several elements at once, you won't know which of them produced the result.

2. Divide the audience equally and randomly. Each user should be assigned to exactly one segment. The audiences in the segments should also be homogeneous, meaning they have similar characteristics or needs.

3. Run tests at the same time. Take measurements in all segments in parallel, over the same period, to exclude the impact of external factors (seasonality, day of the week, time of day, advertising campaigns, etc.).

4. Determine the sample size. Work out how many unique visitors are needed for the A/B test results to be statistically significant. An online calculator can do this for you.

5. Track the key metric. Keep track of changes in key metrics, without forgetting to measure the impact on secondary metrics. Advertisers most often use two metrics: click-through rate and conversion rate.

6. Determine the test duration. Run the test for at least a week, even if you reach a statistically significant number of visitors in half a day, because user behavior can differ across days of the week.

7. Check statistical significance. The higher the confidence level, the more confident you can be in the results. In most cases, 95–98% is sufficient. You can calculate statistical significance using this free tool.
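Rules 4, 6, and 7 can be combined into a rough planning calculation. This sketch uses the standard normal-approximation sample size formula for comparing two conversion rates with a two-sided test, assuming 95% confidence and 80% power; online calculators may use slightly different formulas and return somewhat different numbers. The daily traffic figure is hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p1: float, p2: float,
                            confidence: float = 0.95, power: float = 0.80) -> int:
    """Visitors needed in EACH variant to detect a change from rate p1 to p2
    with a two-sided two-proportion z-test (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # ~1.96 for 95%
    z_beta = NormalDist().inv_cdf(power)                       # ~0.84 for 80%
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimate_duration_days(n_per_variant: int, daily_visitors: int, min_days: int = 7) -> int:
    """Days to collect both samples, but never less than one full week."""
    return max(min_days, math.ceil(2 * n_per_variant / daily_visitors))


# Example: the 3% -> 7% hypothesis, with a hypothetical 500 visitors per day.
n = sample_size_per_variant(0.03, 0.07)
print(f"{n} visitors per variant, run for {estimate_duration_days(n, 500)} days")
```

Smaller expected changes need much larger samples, because the required size grows roughly with the inverse square of the difference between the two rates.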

10 useful A/B testing tools

Google Optimize

The service is built on Google Analytics. It lets you test your website's content, including multivariate tests and redirect tests.

Evan's Awesome A/B Tools

The service includes a sample size calculator, a two-sample t-test, a chi-square test, a Poisson means test, and a survival times test.

Driveback Tools

A service for determining sample size and statistical significance.

Optimizely

A tool for web experimentation with additional personalization.

Visual Website Optimizer

A tool with additional personalization and split testing capabilities, as well as the ability to view heat maps.

Convert

A simple A/B testing service with many features and support for personalization.

Taplytics

A platform for conducting A/B tests on mobile devices, with analytics.

AB Tasty

This service supports A/B testing and multivariate testing with a low-code/no-code approach.

Omniconvert

This platform is designed for eCommerce companies looking to become more customer-focused. It allows split testing of web pages, apps and products. You’ll get detailed information about your visitors.

Unbounce

This is a powerful tool for creating landing pages. You can easily create a stunning design with a drag-and-drop builder and customize templates using JavaScript or CSS. Create pages and run A/B tests to determine which elements work and which don't.

Avoid these mistakes in A/B testing

✅ Using too many variations.

✅ Choosing too short time frames.

✅ Running multiple A/B tests at the same time.

✅ Stopping tests too early.

✅ Drawing conclusions prematurely.

How to A/B test push notifications?

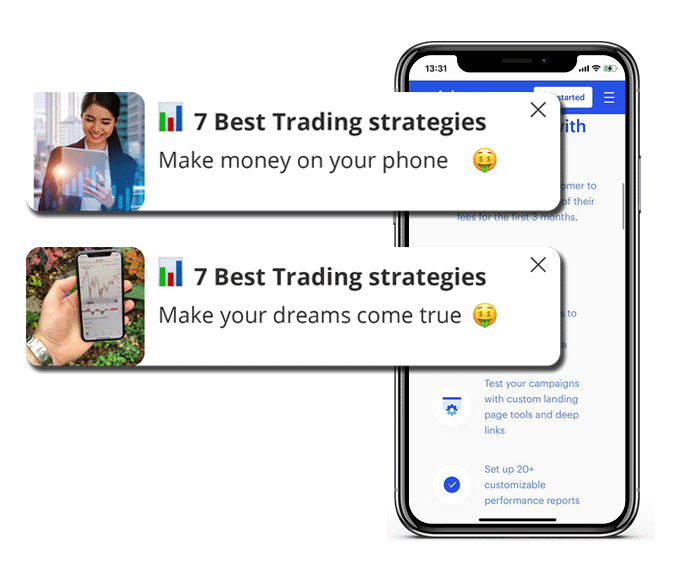

Affiliate marketing is all about testing hypotheses. The icon, title, subtitle, and image are the elements you can compare in ad campaigns.

For example, you can A/B test icons while all other elements stay unchanged. Otherwise, it is no longer an A/B test.

In the following push notification example, we changed the title and subtitle.

In the next one, by contrast, we changed only the images.

The same approach can be applied to display ads.
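Before launching, you can check that two creatives differ in exactly one element, as in the icon example. The `PushCreative` model and file names below are hypothetical illustrations, not an ad network's API.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class PushCreative:
    """Hypothetical model of a push notification creative."""
    icon: str
    title: str
    subtitle: str
    image: str


def changed_elements(a: PushCreative, b: PushCreative) -> list[str]:
    """Names of the elements that differ between two creatives."""
    return [f.name for f in fields(PushCreative) if getattr(a, f.name) != getattr(b, f.name)]


def is_single_element_test(a: PushCreative, b: PushCreative) -> bool:
    """True if exactly one element was changed."""
    return len(changed_elements(a, b)) == 1


original = PushCreative("icon_red.png", "Hot deals today", "Up to 50% off", "banner_1.jpg")
icon_test = replace(original, icon="icon_blue.png")   # only the icon differs
print(changed_elements(original, icon_test))          # ['icon']
print(is_single_element_test(original, icon_test))    # True
```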

How to evaluate A/B test results?

Select the Smart rotator and upload all creatives. Then specify the number of impressions and the time limit. When either limit is reached, the Smart rotator determines the winning creative by CTR.
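The stopping rule described above (stop at whichever limit comes first, then pick the creative with the highest CTR) can be sketched as follows. This only illustrates the logic, not the Smart rotator's actual implementation; it assumes the impression limit counts impressions across all creatives, and the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    """Hypothetical per-creative counters collected during a rotation test."""
    name: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def should_stop(stats: list[CreativeStats], elapsed_hours: float,
                impression_limit: int, hour_limit: float) -> bool:
    """Stop as soon as EITHER limit is reached (impressions summed over creatives)."""
    total_impressions = sum(s.impressions for s in stats)
    return total_impressions >= impression_limit or elapsed_hours >= hour_limit


def pick_winner(stats: list[CreativeStats]) -> CreativeStats:
    """The creative with the highest CTR."""
    return max(stats, key=lambda s: s.ctr)


stats = [CreativeStats("icon_red", 52_000, 468), CreativeStats("icon_blue", 51_000, 561)]
if should_stop(stats, elapsed_hours=90, impression_limit=100_000, hour_limit=168):
    winner = pick_winner(stats)
    print(f"Winner: {winner.name} (CTR {winner.ctr:.2%})")
```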

The number of impressions you need depends on the offer and the user flow. For example, testing crypto and gambling offers requires more time and money than testing sweepstakes (SOI) offers.

100K impressions is the minimum you need to evaluate the results. The more impressions you get, the more accurate your data will be.
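To see why more impressions give more accurate data, you can compute the approximate 95% margin of error of a CTR at different volumes. This sketch assumes a hypothetical 1% CTR and uses the normal approximation.

```python
import math


def ctr_margin_of_error(ctr: float, impressions: int, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for an observed CTR."""
    return z * math.sqrt(ctr * (1 - ctr) / impressions)


# Hypothetical creative with a 1% CTR, measured at different volumes.
for n in (10_000, 100_000, 1_000_000):
    print(f"{n:>9,} impressions: CTR 1.00% ± {ctr_margin_of_error(0.01, n):.3%}")
```

The margin of error shrinks with the square root of the number of impressions, so every tenfold increase makes the estimate about 3.2 times more precise.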

As for campaign duration, run tests for 168 hours (1 week).

In conclusion

A/B testing applies to websites, mobile apps, advertising campaigns, email newsletters, and any other channel you want to improve. After collecting the data, fix the problems you identified, build on growth areas, and implement the successful changes. Include the results in your optimization plan. Most importantly, test continuously, and you will soon see a positive trend!

Reach out to your account manager for help fine-tuning a campaign.
